Please find below the transcript:

Jeremie (00:00):

Hey, everyone. Jeremie here. Welcome back to the podcast. I’m really excited about today’s episode because we’ll be talking about how responsible AI is done at Facebook. Now, Facebook routinely deploys recommender systems and predictive models that affect the lives of literally billions of people every day. And with that kind of reach comes huge responsibility, among other things, the responsibility to develop AI tools that are ethical and fair, as well as well characterized.

Jeremie (00:22):

This really isn’t an easy task. Human beings have spent thousands of years arguing about what fairness and ethics even mean, and we haven’t come to anything close to consensus on these issues. And that is exactly why the Responsible AI community has to involve as many disparate perspectives as possible when they’re determining what policies to explore and recommend. And that’s a practice that Facebook’s responsible AI team has applied itself.

Jeremie (00:44):

Now, for this episode of the podcast, I’m joined by Joaquin Quiñonero-Candela, the distinguished tech lead for Responsible AI at Facebook. Joaquin’s been at the forefront of AI ethics and the AI Fairness Movement for years. And he’s overseen the formation of Facebook’s entire Responsible AI team basically from scratch. So, he’s one of relatively few people with hands-on experience making critical AI ethics decisions at scale and seeing their effects play out.

Jeremie (01:09):

Now, our conversation is going to cover a whole lot of ground from philosophical questions about the definition of fairness itself, to practical challenges that arise when implementing certain AI ethics frameworks. This is a great one. I’m so excited to be able to share this episode with you, and I hope you enjoy it as well. Joaquin, well, thanks so much for joining me for the podcast.

Joaquin (01:26):

Thank you, Jeremie. Thank you for having me. It’s a great pleasure and I absolutely love your podcast.

Jeremie (01:32):

Well, thanks so much. It’s a thrill to have you here. I’m really excited for this conversation. There’s so much that we can discuss, but I think there’s a situational piece that’ll be useful to tee things up. And that’s a short conversation about your background, how you got into this space, how somebody just came in from outside of ML and academic journey and then eventually came to the position that you’re in, which is leading up the Responsible AI initiative at Facebook. How did you get there?

Joaquin (01:56):

We have to have a deal where you can interrupt me whenever you want because once I get talking about my journey, I can go long. I think at a very, very high level, I finished my PhD in machine learning in 2004. At the time, very few people used the word AI in the community that I was in. The NeurIPS community was mostly an ML community. I had a little stint of being an academic. I was a postdoc at the Max Planck Institute in Germany. My first transition was I joined Microsoft Research in Cambridge in the UK in January 2007 as a research scientist.

Joaquin (02:43):

And so what happened there was a very fundamental thing in my career, which is that I came across product teams that were using machine learning. In particular, we started to talk to the team that would become the Bing organization, so Microsoft’s search engine, before it launched. And we started to apply ML to increase the relevance of ads for people. And specifically, we were building models that would predict whether someone would click on an ad if they were to see that ad. That work really got me hooked to the idea that ML was not just something that you did in scientific papers, but it was something that you could actually put in production.

Joaquin (03:24):

So, in 2009, the Bing organization asked me, “Hey, why don’t you leave the comfort of Microsoft Research, that comfortable sort of research environment, and why don’t you become an engineering manager and lead a team within Bing?” So, I did just that. It was probably one of the most stressful and dramatic transitions in my life because I knew nothing about being an engineering leader. I wasn’t used to doing roadmapping, headcount planning, budgeting, being on call, being responsible for key production components. So, it was pretty stressful. But at the same time, it was wonderful because it gave me the view of the other side.

Joaquin (04:04):

And one theme that has been recurring in my whole career and still is now is this idea of technology transfer. The funnel that goes from a whiteboard and some math, some idea, some research, some experiment, all the way to a production system that needs to be maintained and running.

Joaquin (04:22):

And so this transition gave me a first person view into that other world on the other side of research, that other world where sometimes as a researcher, you’d go in and you’d say, “Hey, you all should consider using this cool model I just built,” and then people would go like, “I don’t have time for this. I’m busy.” So, now all of a sudden, I was on the other side. It was a great experience, ended up also helping to build the auction optimization team as well. So, that got me to learn a little bit about mechanism design and economics and auctions.

Joaquin (04:57):

And then I came to Facebook in May 2012. I joined the Ads organization. I started to build out the machine learning team for Ads. And then I think the second big moment in my career happened, which is that, I think the phrase I would tell everyone is it just hit me really hard that wizards don’t scale.

Joaquin (05:16):

So, the challenge we had is we had a few brilliant machine learning researchers at Facebook, but the number of problems we needed to solve just kept increasing. And we were almost gated by our own ability to move fast. People would be building models in their own box, having their own process for shipping them to production, and I felt the whole thing was slow. And the obsession became, how can we accelerate research to production? How can we build the factory?

Joaquin (05:51):

And that was an interesting time because all of a sudden, the difficult decision there was to say, well, rather than rushing to build the most complicated neural network for ranking and recommendations possible, let’s just stick to the technology we have, which is reasonable. We were using boosted decision trees and we were using online linear models and things like that, which are relatively simple. At large-scale, that’s maybe not that simple. But the idea was, can we ship every week? Can we go from taking many weeks from-

Jeremie (06:26):

Wow.

Joaquin (06:26):

Yeah, that was the vision. The vision was ship every week. It became almost like the mantra. Everyone was like, “Ship every week.” And so that led us to build the entire ecosystem for managing the path from research to production. And it did many things. It’s a set of tools that we built across the company. It’s interesting because it was focused on ads only. The idea was that it would be very easy for anyone in ads building any kind of model to predict any kind of event on any kind of surface to actually share code, share ideas, share experimental results.

Joaquin (07:00):

Almost also actually to know who to talk to. Actually, a big thing is to build a community. The vision was for this to be agnostic to the framework you actually express your models in. When TensorFlow and Keras became popular, we supported that of course. We support PyTorch. But if you really want to write stuff from scratch in C++, be our guest. It’s pretty agnostic. It’s all about managing workflows.

Joaquin (07:28):

And what ended up happening is that teams started to ask to adopt what we were building. And fast forward in time, what happened is that the entire company adopted what we built. We started to add specialized services on top for computer vision, for NLP, for speech, for recommendations, machine translation, basically everything. And that led to the creation of a team called Applied ML, which I helped build and led for a few years, which essentially just provided for the entire company and democratized ML and put it into the hands of all product teams.

Joaquin (08:06):

The idea was that since wizards don’t scale, you don’t need that many wizards, that the wizards will be the creators of fundamental innovation, but then you can have an ecosystem of hundreds, if not thousands of engineers across a company who can very easily leverage and build on those things. So, I did that until three years ago essentially. And I’ll take a breath here in case you want to ask me any question about this part before we transition into, I guess, what would become then the third big moment, which is the transition to Responsible AI.

Jeremie (08:41):

One of the big take-homes for me in this, especially with the second phase of your career, is you’re talking about implementing processes, like getting really, really good at implementing processes. And it seems to me, anytime people talk about AI ethics, AI fairness, that sort of thing, that this is the thing that gives me chills or makes me a little bit nervous about the whole space, is the apparent lack of consistency.

Jeremie (09:04):

It’s hard to know what AI fairness is, what AI ethics is. It seems like everyone has a different definition. And it’s also unclear to me how, even once we have those definitions, we can then codify them into processes that are replicable and scalable. So, that expertise must’ve been critical, right? I mean, that process development flow, that’s going to map onto the fairness in AI work?

Joaquin (09:27):

It does. Now, you hit the nail on the head. It’s interesting because a lot of the things we did when we were democratizing AI inside the company are being very helpful now that we’re working on Responsible AI, although maybe that was not the first idea we had in mind. But the idea that right now we have the tooling that allows us to see what are all of the models that are deployed in production across Facebook and who’s built them and what went into them turns out to give you this level of abstraction and consistency, which is really important. But of course, there’s a lot more to Responsible AI. Like you said, there is broad disagreement on definitions of concepts. So, maybe let me tell you about my own journey into that world.

Joaquin (10:23):

I had been following with the corner of my eye the Fairness, Accountability, and Transparency Workshop. I believe the first year it took place was probably 2014 or something like that at NeurIPS, and then it kept recurring. And then I believe 2018 might be the year where it spun out. And in fact, I attended that workshop. It became its own sort of separate conference and it took place, 2018 it was in New York.

Joaquin (10:53):

But just before that, at the end of 2017 at the NeurIPS conference, there were a couple of fundamental keynotes by people like Kate Crawford or Solon Barocas and many others. And it just became obvious to me, like my whole brain exploded in a way and I thought, the time is now. It’s obvious the time is now. We need to put the foot on the gas here. We need to go all in. We need to go from initial efforts to really, really build a big dedicated effort that focuses on Responsible AI.

Joaquin (11:27):

And then with my background as someone who loves math and as someone who loves engineering and as someone who loves processes and platforms and giving people tools, I sort of thought, okay, well, I think we can figure algorithmic bias out in a couple of months [crosstalk 00:11:42].

Jeremie (11:43):

It can’t be that hard.

Joaquin (11:44):

Yeah, it can’t be that hard. How hard can it be? I thought, let’s take a look here. So, I looked at some of the work, some of the definitions. And then of course, immediately it became clear. This is work by Arvind Narayanan, who gives this beautiful talk, 21 definitions of fairness and their politics. There’s a talk he gave about that in 2018. At the time it was called the FAT* conference. Now it’s called FAccT. [inaudible 00:12:11] community were great at coming up with terrible names like [NIPS 00:12:14] and FAT. And then luckily we renamed them.

Jeremie (12:18):

Move fast and break things.

Joaquin (12:19):

Move fast and break things. So, I thought, okay, well, that’s fine. Out of these 21 definitions, some of the definitions of fairness try to equalize outcomes between groups, some of the definitions of fairness try to focus on treating everyone the same, and I’m like, okay, how many do we really need? And it’s like, maybe we can implement a couple. I imagine like a drop down box like, okay, which one do I pick as a [crosstalk 00:12:44] practitioner? I thought we can have beautiful visualizations of data composition broken down by subgroup, accuracy of your model, calibration curves, all these things. I’m like, okay, we got this, we can get this done in a couple of months.

Joaquin (12:59):

Then it hit me like a ton of bricks that AI fairness is not primarily an AI problem, and that math does not have the answer. And that it’s extremely context dependent what definition of fairness you should use. That you need to build multi-disciplinary teams that involve people who come from moral and political philosophy. That’s actually extremely important. And probably the most important one of all is that fairness is not a property of a model. It’s not a status box. It’s actually a process. Fairness is a process. In fact, I’m going to tilt the camera a little bit and show you … I intentionally am wearing this T-shirt we made for the team that says, fairness is a process.

Jeremie (13:45):

Ah, very nice.

Joaquin (13:46):

So, this is a set of T-shirts we built for the team because I kept repeating this all the time. And so our executive assistant for the team one day came up with this pile of T-shirts for everybody and says, “Okay, you all keep saying all the time fairness is a process [inaudible 00:14:03].” So anyway, we wear them with pride. You’re never done. The thing that maybe is a little bit hard for an engineer to think about is that this is not like, oh, I’m going to build this microphone here and it’s done. I’ve tested it, it works. It’s not like that.

Joaquin (14:20):

And if you go back in time, I guess humanity has been discussing fairness since Aristotle. And we’re still discussing, and we don’t agree. And it becomes political as it should because we have different ideologies. So, you need to build these processes that are multidisciplinary, that involve risk assessments, surfacing the decisions, documenting how you make them and all of that. And that’s only for fairness. Obviously, in Responsible AI you have many other dimensions to tackle as well.

Jeremie (14:54):

I love the idea of fairness as a process partly because, well, there’s this well-known principle called Goodhart’s law. As soon as you define a metric, you say, we’re going to optimize this one number, then all of a sudden people find ways around it, they find hacks, they find cheats. I’m arguably seeing that with the stock market today.

Jeremie (15:12):

The time was back in 1950 as a general rule, if the stock market went up, people’s quality of life went up. But now we’re seeing a decoupling as people start to play games and politics and optimize around it. So, this seems like … I mean, is this part of the strategy is recognizing the fact that there’s no single loss function, no single optimization function to improve and instead look like let’s focus on the process?

Joaquin (15:35):

Absolutely. I have several things I’d like to say about this. First of all, every single metric is imperfect. Even if you’re not working on fairness specifically, the moment you set a metric goal, and in particular, I think one of the reasons that many technology companies are very successful is that I think we can iterate fast, we can be very quantitative, we can be metrics-driven, and we can move those metrics.

Joaquin (16:11):

But that at the same time is also potentially our Achilles heel, that is what can actually get you into trouble. So, it’s essential to develop very strong critical thinking. And it’s essential to develop what we call counter metrics, like other metrics that work in opposition, which you should not make worse as you make this one metric better. But even that is-

Jeremie (16:36):

[crosstalk 00:16:36] an example of one of those actually? That sounds like a fascinating idea.

Joaquin (16:39):

A simple idea would be, imagine that you are trying to improve the accuracy of a speech recognition system and you’re measuring it by word error rate or something like that, whatever you want. So, one counter metric you could build if you cared about fairness is you could actually break down, you could disaggregate your metric, say by, in the U.S. you could do this by some geographical grouping since accents vary across the country. And then your counter metric could be that you cannot decrease performance for any group because averages will always get you, right?

Jeremie (17:21):

Yeah.

Joaquin (17:21):

You can make things twice better for the majority group, you can make them worse for another group, and you might not even realize. So, that’d be a very fairness centric metric. But you could imagine many other scenarios where you’re trying to optimize many aspects of an experience, and there are certain things that you don’t want to make worse.
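The counter-metric idea described here can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the region names, error rates, traffic shares, and the helper names `overall_error` and `regressed_groups` are all hypothetical, not anything described in the conversation.

```python
from typing import Dict

# Hypothetical word error rates per regional group (lower is better).
# All names and numbers below are illustrative assumptions.
BASELINE = {"region_a": 0.10, "region_b": 0.12, "region_c": 0.15}
CANDIDATE = {"region_a": 0.05, "region_b": 0.12, "region_c": 0.18}
TRAFFIC_SHARE = {"region_a": 0.8, "region_b": 0.1, "region_c": 0.1}


def overall_error(errors: Dict[str, float], weights: Dict[str, float]) -> float:
    """Traffic-weighted average error: the usual roll-up metric."""
    total = sum(weights.values())
    return sum(errors[g] * weights[g] for g in errors) / total


def regressed_groups(
    baseline: Dict[str, float],
    candidate: Dict[str, float],
    tolerance: float = 0.0,
) -> Dict[str, float]:
    """Counter metric: groups whose error got worse by more than `tolerance`."""
    regressions = {}
    for group, base_err in baseline.items():
        delta = candidate[group] - base_err
        if delta > tolerance:
            regressions[group] = delta
    return regressions


avg_before = overall_error(BASELINE, TRAFFIC_SHARE)
avg_after = overall_error(CANDIDATE, TRAFFIC_SHARE)
blocked = regressed_groups(BASELINE, CANDIDATE)

print(f"average error: {avg_before:.3f} -> {avg_after:.3f}")
print("groups that regressed:", sorted(blocked))
```

In this made-up example the average improves because the majority group improves a lot, while one smaller group gets worse; the counter metric is what surfaces that regression.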

Jeremie (17:51):

I think one of the first comments you made about this was fairness or definitions of fairness are not a challenge for the data scientists.

Joaquin (17:58):

Right.

Jeremie (17:58):

That’s not something they should have to worry about, which makes total sense. Data scientists don’t have time to get philosophy degrees and degrees in public policy. So, it’s going to be someone’s job ultimately. And whether that’s a policy maker or somebody with more of a liberal arts background, one question that comes to mind is the character of conversations around these metrics gets really deeply technical really fast. And I imagine just being able to communicate the nature of the decisions being made, that’s its own challenge. Do you see that coming up a lot, and are there any strategies that you use to address that challenge?

Joaquin (18:32):

It’s a challenge. One of the things we’ve been having success with is to define a consistent vocabulary for talking about fairness across the company and also a consistent set of questions that are increasing in their complexity to make this very concrete and illustrated with examples. One of the most fundamental definitions of fairness you can have is to ask the question, does my product work well for all groups? The question of defining groups is very context dependent as well of course.

Joaquin (19:18):

To my left I have our Facebook Portal camera, and it has a smart AI that is capable of tracking people. If I’m video conferencing with my parents in Spain or my parents in [inaudible 00:19:36], Germany, which is basically now you can imagine how our weekends go with our kids or we’re videoconferencing with them, we’ve actually given them some of those devices too because if you have your phone that you’re using to Skype or to FaceTime or to use whatever software you use, it’s really annoying to try to keep the frame centered and so on.

Joaquin (19:56):

And so with Portal it’s magic, but the AI that tracks people and that sees if you have two people to zoom it out, crop, and center and everything, you can’t take for granted that it’s going to work equally well across skin tones or genders or ages. In that context, you can say, well, how do I define a minimum quality of service? And a minimum quality of service is going to be that the failure rate where someone gets cropped out or something has to be smaller than a certain small percent of the time or of the number of frames or sessions or something.

Joaquin (20:34):

And that’s a reasonable concept, this idea of minimum quality of service. I want my product to work well enough for everybody. Of course, within the context of my product, one of the key questions is, hey, given what you’re trying to build, who are the most sensitive groups you should consider? Later on, if you want, I’ll put something in the topic jar, which is we can talk about election interference. And we can talk about how AI can help in that context. And we can talk about the India elections. And we can then reason about what groups are relevant in that context.

Joaquin (21:12):

But back to the Portal example, the most basic set of questions about fairness would be, hey, can you disaggregate your metrics? Teams typically have launch criteria. So the data science, the ML team will say, “Hey, is this model good enough?” Well, the question is, instead of having a roll-up metric, disaggregate it by group, in this case, it could be skin tone, there’s many scales you can use for this, age and gender, and then for each of those buckets, look at the bucket for whom the performance is worst and imagine that that was the only population you had, would you still launch?

Joaquin (21:50):

If the answer is no, then maybe you need to sit back and figure out, okay, how do I make sure that I’m not leaving anybody behind. Then leading to the India elections, there is one set of questions and one definition of fairness that is more comparative in nature. In a way, minimum quality of service compares against the bar, but it doesn’t necessarily then worry about, oh, it may work still a bit better for a group than another. It’s fine as long as it works well enough for everybody. And I’ll put another topic in the topic jar, which is Goodhart’s law and how even you can game or screw up your minimum quality of service as well.
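The worst-bucket launch question from the Portal example can be written down as a small check. The following is a minimal sketch, assuming hypothetical bucket names, session counts, and a 2% failure bar; none of these values come from the conversation.

```python
from typing import Dict, Tuple

# Hypothetical per-group counts: (failed_sessions, total_sessions).
# Bucket names, counts, and the 2% bar are illustrative assumptions.
COUNTS: Dict[str, Tuple[int, int]] = {
    "bucket_1": (90, 9000),
    "bucket_2": (11, 900),
    "bucket_3": (6, 100),
}
MAX_FAILURE_RATE = 0.02


def failure_rates(counts: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Disaggregated metric: failure rate for each group separately."""
    return {g: failed / total for g, (failed, total) in counts.items()}


def rollup_rate(counts: Dict[str, Tuple[int, int]]) -> float:
    """Roll-up metric: failure rate over everyone pooled together."""
    failed = sum(f for f, _ in counts.values())
    total = sum(t for _, t in counts.values())
    return failed / total


def worst_group(rates: Dict[str, float]) -> Tuple[str, float]:
    """The group with the highest failure rate."""
    group = max(rates, key=rates.get)
    return group, rates[group]


rates = failure_rates(COUNTS)
rollup_ok = rollup_rate(COUNTS) <= MAX_FAILURE_RATE
worst_name, worst_rate = worst_group(rates)
launch_ok = worst_rate <= MAX_FAILURE_RATE  # "would you still launch?"

print("roll-up passes the bar:", rollup_ok)
print("worst group:", worst_name, f"{worst_rate:.3f}")
print("worst group passes the bar:", launch_ok)
```

With these invented numbers the pooled metric clears the bar while the smallest bucket does not, which is the situation the minimum-quality-of-service question is meant to catch.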

Joaquin (22:30):

But the next level up would be equality or equality of treatment. You could say, okay, well, I actually care about differences in performance. And the India elections example goes like this. Last year around April, May, if I remember correctly, we had the biggest elections in the history of humankind. I think it was close to a billion registered voters or something along those lines. A little bit less than that, but massive. And in a time where there are many concerns of misinformation and attempts of manipulation.

Joaquin (23:08):

The way we would tackle this without AI would be to have humans who are given standards, community guidelines, content policies, and who check that content doesn’t violate those. And if it does, you take them down. But the problem is at the scale of this election and at the volume we have, there’s no number of humans you can hire to do this. So then what you need to do is you need to prioritize the work because obviously, my post about me playing the guitar or featuring my cat or my dog, we shouldn’t waste time reviewing those.

Joaquin (23:47):

But if there are issues that politicians are discussing, or even if it’s not politicians, organizations are talking about civic content, content that discusses social or political issues, then we should prioritize those. So, we built an AI that does just that. We call it the civic classifier. So, what it does is it sifts through content and it identifies content that is likely to be civic in nature, and then prioritize that for review by humans.

Joaquin (24:20):

What’s the fairness problem? Well, the fairness problem is that in India, you have, I think it’s over 20 official languages, and there are many regions that have very different cultural characteristics. So really, if you break down your population into regions and languages, you can see how those actually correlate with things like religion or caste. And the social issues that concern people in different regions are different. But now you have an AI that’s prioritizing where we put those human resources to review content. What happens if that AI only works well for a couple of languages and it doesn’t work for others?

Joaquin (25:01):

So, if you take the language analogy, what happens if it overestimates risk for a language? Meaning we would prioritize … we’d put more human resources to review posts in that language. And if it underpredicts or underestimates the likelihood that something is civic in nature for another language, then we’re not allocating enough human resources there.

Joaquin (25:22):

And then we decided not to stop by minimum quality of service, we thought, this is a case where we really want equal treatment. And since what we have here is a binary classifier that outputs a number between zero and one that says, what’s the probability that this piece of content is civic, then what we said is well, we want those predictions to be well calibrated for every single language and every single region.
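One way to make "well calibrated for every single language" concrete is to compute a calibration summary separately per group. The sketch below uses expected calibration error (ECE) as that summary; this is an assumption chosen for illustration, not the measure the team is stated to use, and the language labels and scores are made up.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def expected_calibration_error(
    probs: List[float], labels: List[int], n_bins: int = 10
) -> float:
    """Weighted average gap between mean predicted probability and
    observed positive rate, over equal-width probability bins."""
    bins = defaultdict(list)
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[idx].append((p, y))
    n = len(probs)
    ece = 0.0
    for items in bins.values():
        mean_p = sum(p for p, _ in items) / len(items)
        rate = sum(y for _, y in items) / len(items)
        ece += (len(items) / n) * abs(mean_p - rate)
    return ece


def calibration_by_group(
    records: Iterable[Tuple[str, float, int]], n_bins: int = 10
) -> Dict[str, float]:
    """records are (group, predicted_probability, true_label) triples."""
    grouped = defaultdict(lambda: ([], []))
    for group, p, y in records:
        grouped[group][0].append(p)
        grouped[group][1].append(y)
    return {
        g: expected_calibration_error(ps, ys, n_bins)
        for g, (ps, ys) in grouped.items()
    }


# Hypothetical scores: the classifier says 0.8 for five posts and 0.2 for
# five posts in each language. In lang_a, 4 of 5 and 1 of 5 are truly civic;
# in lang_b, only 2 of 5 and 1 of 5 are, so risk is overestimated there.
records = (
    [("lang_a", 0.8, y) for y in (1, 1, 1, 1, 0)]
    + [("lang_a", 0.2, y) for y in (1, 0, 0, 0, 0)]
    + [("lang_b", 0.8, y) for y in (1, 1, 0, 0, 0)]
    + [("lang_b", 0.2, y) for y in (1, 0, 0, 0, 0)]
)
per_language = calibration_by_group(records)
for language, ece in sorted(per_language.items()):
    print(language, f"{ece:.3f}")
```

A large gap for one language relative to the others would suggest that review capacity is being over- or under-allocated to that language, which is the allocation concern raised above.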

Joaquin (25:47):

So again, the notion of fairness there, we set a higher bar because this was about fairness of allocation of resources. And then just to complete the picture, the third common definition and sets of questions associated that we’re also using internally is around equity and is around asking, not just should we treat everybody and everything the same, or should the performance of the system be above a certain bar for everybody? The question then becomes, is there any group of people, for example, for whom the product outcomes deserve special attention? And this can be because there might be some historical context that we should take into account.

Joaquin (26:42):

And one example we’ve seen in 2020 in the wake of racial justice awareness in the U.S. has been the focus, for example at Facebook, on business outcomes for Black-owned businesses. So, we’ve built product efforts that help give visibility to Black-owned businesses because we felt that the Facebook platform has the opportunity to help improve a situation that was already there.

Joaquin (27:17):

Many times the question when you think about fairness too is one of responsibility. It’s like, okay, people may say, well, but society is biased, and here I am, I’ve built a neutral piece of technology, I don’t have skin in this game. And I think what we’re saying is it doesn’t work that way. Your piece of technology can reflect these biases, it can perpetuate them, it can encapsulate them inside of a black box that legitimizes them or that makes them very difficult to debug or understand. And then on the flip side, technology can also make outcomes better for everybody. But sometimes we have to ask ourselves, is there any group that we’re going to prioritize? That was a lot of words.

Jeremie (28:03):

No. I think it’s hard to answer the question, how is fairness managed at Facebook in a Twitter [inaudible 00:28:10].

Joaquin (28:09):

Right.

Jeremie (28:10):

But I think it’s really interesting the different kind of lenses and different approaches to fairness you’re describing. From a process standpoint, one of the things I’d be really curious about is, where within the organization does the decision with respect to which standard of fairness to apply come from? And do you think that the process for making that decision is currently formalized and structured well enough? Obviously, there’s going to be iteration, but do you feel like you have a good sense for how that process should be structured?

Joaquin (28:42):

Yeah. So, we’re building the process. But what was very clear to us is that it’s a hub-and-spokes model. So, we have a centralized Responsible AI team that is multidisciplinary. It has, as I mentioned earlier, not only AI scientists and engineers, but it also has moral and political philosophers and social scientists. And what that team does is the whole layer cake that ranges from providing an ethical framework for asking some of these questions, so that would be the qualitative part, if you will, then going more into the quantitative part, which is like, hey, how do we …

Joaquin (29:26):

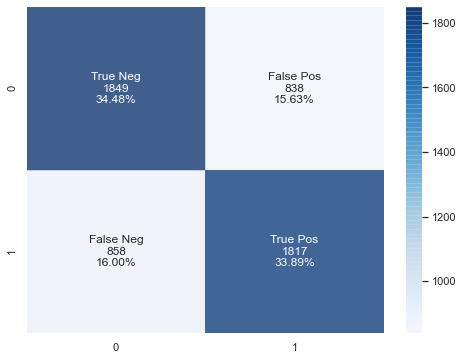

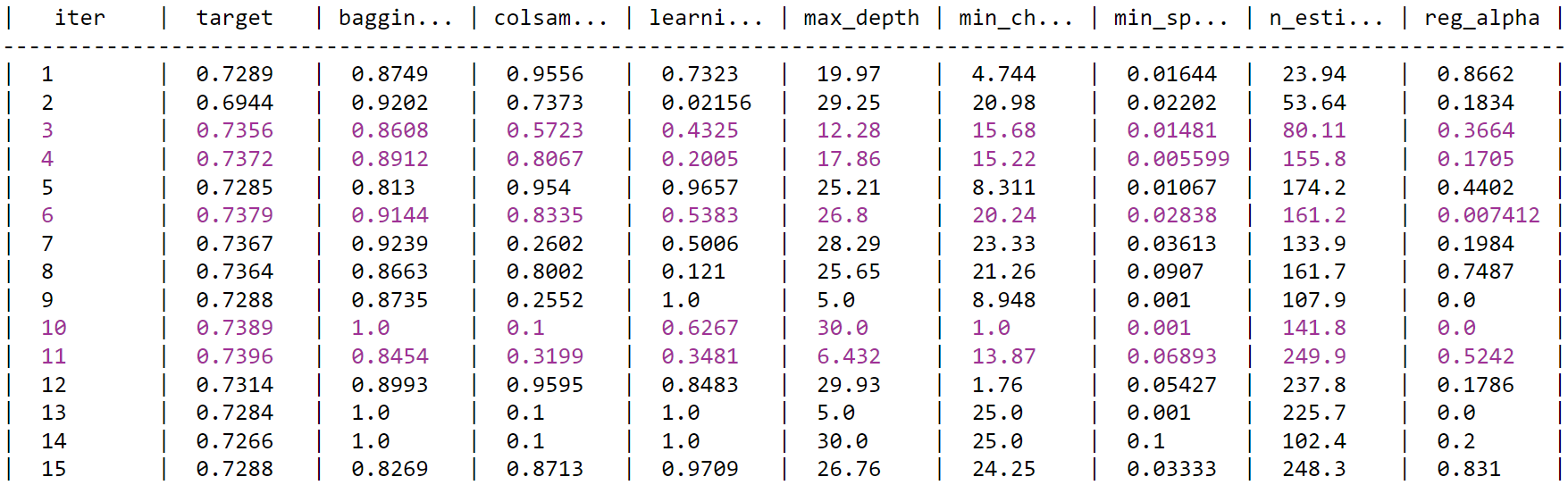

Now you talked about equality versus minimum quality of service. And for equality, you talked about binary classifiers. What does that actually mean in math? What do I look at? Do I look at false positive rates between groups or something like? Answer, no, we don’t necessarily because that has problems. But what do you do?

Joaquin (29:48):

And then there’s another layer beneath this, which is more the platform and tooling integration. Again, leveraging all of the work we had done in Applied ML, how do we make it really easy and really friendly to be able to break down the predictions of the algorithm by groups, et cetera? So, you have this entire layer cake including things like office hours, which are actually really important. It is centralized.

Joaquin (30:15):

And then what you see is that every product team is building their own embedded expert group that will collaborate very closely with a centralized team. And the reason you have to do it this way is that you need the centralized team to have consistent practices, consistent terminology and definitions, but the decisions, to your point earlier, need to be made in context.

Joaquin (30:45):

And so it would be impractical and inefficient to have the centralized team make hundreds of decisions that are very context-dependent. And then the biggest thing is because we said, it’s a process, you don’t make the decision once and you’re done. You keep at it. Right?

Jeremie (31:04):

Yeah.

Joaquin (31:06):

Just as a side note, I watched the movie, Coded Bias, by Shalini Kantayya. It features Joy Buolamwini and Timnit Gebru and Cathy O’Neil and many other brilliant researchers on Responsible AI. And I think it must be really dangerous to try to cite people live and then get the person wrong.

Joaquin (31:32):

One of them, I’m going to keep it at that level of abstraction because I don’t remember who said it, one of them said something like, “Many of these Responsible AI practices, like fairness, they’re like hygiene. It’s like you have to brush your teeth every day.” And so that’s how I think about it. And you have to do it in context of your product. So, we’re seeing an increasing number of product teams build their own embedded effort, and we collaborate very, very closely with them.

Jeremie (32:04):

And how do you decide … So, there’s an almost economic aspect of this that I’m fascinated by. I think I saw Amanda Askell, one of OpenAI’s AI policy specialists, she tweeted about this idea that there’s a motif right now in the AI ethics community that if I can find a reason that an algorithm is bad, then that algorithm simply should not be deployed. There’s that vibe in the zeitgeist to some extent where people will say, “Oh, well, this algorithm discriminates in this marginal way.”

Jeremie (32:33):

And of course, we know all algorithms will inevitably discriminate in some way. So, there’s got to be some kind of threshold of some sort where we say, okay, this is too much, and this is not enough. How do you think about, I guess the economic trade-off from that standpoint? I mean, you could try to debias algorithms until the cows come home, but at some point, something’s got to get launched. So yeah, I guess, how do you think about that trade-off?

Joaquin (32:59):

I think it’s extremely difficult to come up with a purely quantitative answer to that question. I mean, again, as a math and engineering person, I’d love to be able to write a cost function where I say, if I’m reducing hate speech using AI, the cost of a false positive is $40 and three cents. And so that’s very hard. I think what that touches on, I think is on a few things. It touches, I think on transparency, and it touches on governance.

Joaquin (33:40):

And I think where I believe things are going to go is that the expectation is going to be that when you build AI into a product that you are very transparent about the risk benefit analysis, there’s a phrase that I’m using these days and I’m probably stealing it from someone and I don’t know, this idea of AI minimization, this idea of … In the same way as people talk about data minimization. So, AI minimization. Instead of my instinct being let’s use AI because it’s cool, it should be like, can I do it without AI? And then if the answer is maybe, but then if I put AI, it adds a ton more value for everybody, it’s like, okay, well, that’s justified, I have a value.

Joaquin (34:33):

And then comes the flip side, which is all the list of risks. And one of the risks may be discrimination, or it may be other definitions of unfairness. And I think being transparent about those trade-offs and having ideally like a closed loop mechanism to get feedback and to adjust and see what’s acceptable, I think this is an inevitable future that we will be in.

Joaquin (35:06):

An example that I use a lot is not an AI example, but again, I’ll say it again, many of these problems are not familiar AI problems. We think about content moderation and the difficult trade-off between giving everybody a voice and allowing for freedom of speech, but on the other hand, protecting the community from harmful content. And there is misinformation that is obviously harmful. We’ve come to the firm conclusion that we neither can nor should do this ourselves or on our own. And when I say we, I’m of course speaking as a Facebook employee. But I think this is generally true across technology.

Joaquin (35:55):

We’re experimenting with this external oversight board. Maybe you’ve heard of it. It’s a group of experts. It’s taken a lot of work from the team that put them together to come up with the bylaws and how they’re going to operate. But let’s focus on the spirit of it for a second. The spirit of it is that there will be decisions that are tricky. No content policy is ever going to be perfect. And so it’s essential to have humans in development to have a governance that includes external scrutiny and expertise. I think at the end of the day, it’s going to be this interplay of transparency, of accountability, of governance mechanisms that are participatory. Right?

Jeremie (36:45):

Yeah.

Joaquin (36:46):

If you zoom out and you think about AI in 10 years being very prevalent, being very consequential, one big question is going to be one of participatory governance, right?

Jeremie (36:58):

Yeah.

Joaquin (36:59):

How do you or I have a say in where the AI that drives our relatives or our pets to the vet, I mean, that would be pretty phenomenal, like some sort of self driving-

Jeremie (37:11):

And that itself, it’s almost as if it imposes this sort of like energy tax on the whole process where Facebook … I think it’s great that Facebook is doing this. I think it’s great that Facebook has a responsible AI division. And to some degree, it makes perfect sense that it can only happen at scale today because it’s only at scale that companies like Facebook and Google and OpenAI can actually afford to build giant language models and giant computer vision models. And this leads … I wish we had more time for this, but I do have to ask a question about Partnership on AI.

Joaquin (37:43):

Of course, yeah.

Jeremie (37:43):

Because it’s such an important part of this equation. One of my personal concerns is actually, we’ve talked a little bit about this here, but just the idea that there really is safety, privacy, fairness, ethics, all this stuff, they do take the form of what is effectively a tax on organizations that are competitive. I mean, Google has to compete with Facebook. Facebook has to compete with everybody else. It’s a market.

Jeremie (38:06):

And that’s a good thing, but as long as there’s a tax for fairness, as long as there’s a tax for safety, that means there’s an incentive to have some kind of a race to the bottom where everyone’s trying to invest minimally in these directions to some degree. And at least the way I’ve always seen it, groups like Partnership on AI have an interesting role to play in terms of mediating that interaction, setting minimum standards. I guess, first off, would you agree with that interpretation? And second, how do you see Partnership on AI playing a bigger role as the technology evolves? Because I know this is a big thing, but I want to make sure I get your broad thoughts on [crosstalk 00:38:40].

Joaquin (38:39):

No, and I’m glad that you brought up Partnership on AI. I am on the board and have been very involved for the last couple of years, and I think it is an organization that the world needs. But I would love your help with understanding the logic behind the race to the bottom hypothesis. And maybe we can make it very concrete by picking any dimension of responsibility that you want. I’m just struggling a little bit. I want to make sure that I really understand what you mean.

Jeremie (39:14):

Yeah, yeah, definitely. So, I’m imagining … Actually, I’ll take an example from the alignment and AI safety community. So, one common one is a hypothesis, I don’t necessarily buy into this, but for concreteness, let’s assume it’s true, that language models can be scaled arbitrarily well and lead to something like super powerful models that are effectively Oracles or something like that. So, they’re essentially unbounding the amount of stuff you can do with super large language models.

Jeremie (39:43):

And to the extent that safety is a concern, you might not want an arbitrarily powerful language model that can plot and scheme. Like you can say, “Hey, how do I swindle like $80,000 from Joaquin?” And the language model goes, “Oh, here’s how you do it.” And yet, companies like OpenAI are competing with companies like DeepMind or whoever else to scale these as fast as they can. Whoever achieves a certain level of ability first, I mean, there’s a decisive advantage in even like six months of the time or whatever.

Jeremie (40:16):

There are analogs for this across, you got fairness, and bias and so on, but there’s always some kind of value that’s lost any time you engage in the company to company competition where you could invest the marginal dollar in safety, or you could invest the marginal dollar in capabilities. And that trade-off is something that … I’m wondering if Partnership on AI could play some sort of role in mediating between organizations setting standards that everybody at least thinks about adhering to, that sort of thing.

Joaquin (40:44):

Yeah. Thank you. I understand the question. The question makes me think a little bit about environmental sustainability in a way, where you could have the same race to the bottom in many ways, not only between companies, but even between countries. It’s like we have one planet. Who’s going to commit to cutting CO2 costs? Like, oh, well, just a second and I’ll be back in a moment.

Jeremie (41:10):

Just after this tree.

Joaquin (41:13):

Exactly. I think that Partnership on AI plays a key role on many fronts. First, being a forum, a very unique forum that brings together every single type of organization you can think of, academia, civil rights organizations, civil society, other kinds of not-for-profits, small companies and big companies. And some of these organizations on Partnership on AI are going to be actively lobbying and shaping and informing upcoming regulation. So, I think there is already a bit of pressure there somehow for big companies to be involved and to lead the way because failing to do so may mean that you have to deal with regulation that is bad for everybody. So, I feel like that’s one angle.

Joaquin (42:16):

The other angle is efficiency. Some of that work that you described, some of that dollar that I could either invest in making sure that my big language models are human value aligned and that they’re not evil, well, if you have an organization that is supported by a large number of for-profit companies and they do the work, projects like ABOUT ML is a project that I love that builds on documentation practices for data sets and for models, so it’s a combination of ideas, like datasheets for datasets and model cards and things like that, these are extremely powerful.

Joaquin (43:04):

And we at Facebook are definitely paying a huge amount of attention and experimenting with that. And I’m glad that we can use something, A, relatively off the shelf, but very [crosstalk 00:43:16], that we didn’t have to build ourselves, and, for extra points, that gets the validation and credibility that you get from it being built by an organization like Partnership on AI, that doesn’t represent just our interests. And I think that that’s going to be a common pattern.

Joaquin (43:33):

That thing of, A, the 360 view that you get by having a multi-stakeholder organization that has everybody. Second, just the efficiency and convenience of having best practices and recommendations that come from people who really know what they’re doing, and where you know that everybody was looking while this was being created. And then the third one is really the legitimacy and validation that you get by using something that, hey, these are not like the ethical principles of Facebook. Right?

Jeremie (44:06):

Yeah.

Joaquin (44:06):

I mean, I would understand if people frowned and people were like, “Oh well, I mean, what interests are represented there? Only Facebook’s,” versus it coming from The Partnership on AI.

Jeremie (44:17):

Yeah. I mean, it’s one of those organizations where I’m hoping to see a lot more of them in the future. And it seems like the kind of thing that the nascent kind of kernel of that organization is hopefully going to keep blossoming because as you say, we need some kind of multi-partisan oversight of this space at so many different levels. And it’s great to see the initiative taking shape. Joaquin, thanks so much for your time. Actually, do you have a place where you like to share stuff, like Twitter in particular, or a personal website that you’d like to point people to if they want to find out more about your work?

Joaquin (44:49):

Oh, I have a confession. So, I’m a late Twitter adopter.

Jeremie (44:58):

Oh, that’s probably good for your mental health.

Joaquin (45:03):

But I now feel a strong sense of responsibility to be contributing and engaging in the public debate [inaudible 00:45:14] might be. I’m going to embarrass myself so much right now by having to even look up my Twitter. On Twitter, I am @jquinonero. So, it’s letter J and then my first last name. It’s complicated. I have two last names because I’m from Spain.

Jeremie (45:33):

We can share it.

Joaquin (45:34):

We have a … Exactly. Yeah, yeah. I have made a personal commitment to engage more, and I’m going to have to learn how to do this in a way that doesn’t disrupt my mental health like you were saying.

Jeremie (45:48):

Yeah. I think that’s one of the biggest challenges, for some reason, especially I find with Twitter is keeping that mindful state and not getting lost in the feed. Boy. Anyway, we’re in for a hell of a decade. But really appreciate your time on this. Thanks so much, and really enjoyed the conversation.

Joaquin (46:05):

Thank you so much, Jeremie. It’s an absolute pleasure to be here. And thank you for doing this podcast, and thank you for getting everybody involved in these kinds of conversations.

Jeremie (46:17):

My pleasure.